Moz Q&A is closed.

After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will remain viewable - we have locked both new posts and new replies.

Are Collapsible DIVs SEO-Friendly?

-

When I have a long article about a single topic with sub-topics, I can make it more user-friendly by limiting the visible text and hiding the rest, showing only the next headlines, by using expandable/collapsible DIVs.

My doubt is whether Google is really able to read onclick text links (with JavaScript), or whether the content could be "seen" as hidden text.

I think I read in the SEOmoz Users Guide that all JavaScript-"manipulated" content will not be crawled. So, from SEOmoz's point of view, would it be better to use old-school named anchors and a side navigation to jump to the sub-topics?

(I asked a similar question in an earlier post, but I did not use the right terms to describe what I really wanted. Also, my text is not long enough (<1000 words) to need pagination with rel="next" and rel="prev" attributes.)

THANKS for every answer

-

Expandable and collapsible DIVs are just fine for SEO. They do a great job of accomplishing great design without compromising content for SEO. Yes, Google can crawl that content just fine. Here's a link to a great webinar that specifically addresses some great pro tips on how best to use them: http://www.seomoz.org/webinars/designing-for-seo

Hope that helps!

Dana
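A minimal sketch of the kind of markup discussed in this thread, written in Python as a small page generator. It assumes the full text of every sub-topic is delivered in the initial HTML and only its visibility is toggled on click; the function name `render_collapsible_article`, the `toggleSection` helper, and the `section-N` ids are hypothetical. Each heading also gets a named anchor, so the side navigation mentioned in the question keeps working without JavaScript.

```python
from html import escape

# Hypothetical helper script: toggles visibility only, the text is already in the page.
TOGGLE_SCRIPT = """<script>
function toggleSection(id) {
  var el = document.getElementById(id);
  el.style.display = (el.style.display === "none") ? "" : "none";
  return false;
}
</script>"""


def render_collapsible_article(sections):
    """Render (heading, body) pairs as a jump-link list plus collapsible DIVs."""
    nav_items = []
    blocks = []
    for index, (heading, body) in enumerate(sections, start=1):
        anchor = f"section-{index}"
        onclick = f"return toggleSection('{anchor}-body')"
        # Named-anchor link for the side navigation.
        nav_items.append(f'<li><a href="#{anchor}">{escape(heading)}</a></li>')
        # The body text is written into the markup itself, not fetched on click.
        blocks.append(
            f'<h2 id="{anchor}"><a href="#{anchor}" onclick="{onclick}">'
            f"{escape(heading)}</a></h2>\n"
            f'<div id="{anchor}-body" class="collapsible">{escape(body)}</div>'
        )
    nav = "<ul>\n" + "\n".join(nav_items) + "\n</ul>"
    return "\n".join([nav, *blocks, TOGGLE_SCRIPT])


if __name__ == "__main__":
    print(render_collapsible_article([
        ("Overview", "Short introduction to the topic."),
        ("Details", "Longer text that starts out collapsed."),
    ]))
```

Viewing the page source of the generated output is a quick way to confirm that every section's text is present before any click happens.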


Related Questions

-

Collapsible sections - content

Hi, I am looking to improve the aesthetics of some pages on my website by adding written content into collapsible tabs. I was wondering whether the content that is ‘hidden’ by tabs is given less weight by Google from the perspective of SEO? Some articles I have read suggest that tabbed content is weighted equally with the content which is already immediately visible to the user, but others suggest that this may not be the case. Please, can I request opinions on the matter? Any advice would be greatly appreciated, many thanks. Katarina

Technical SEO | | Katarina-Borovska0 -

What's the best way for users to upload their images to my WordPress site to promote UGC?

I have looked at lots of different plugins and wanted a recommendation for an easy way for patients of ours to upload pictures of them out partying, having fun, and looking beautiful, so future users can see the final results instead of sometimes gory or difficult-to-understand before-and-after images. I'd like to give them the opportunity to write captions (like Facebook or Insta posts) and would offer them incentives to do so. I don't want it to be too complicated for them or have too many steps or barriers, but I do want it to look nice, slick, and modern. Also, do you think this would have a positive impact on SEO? I was also thinking of a Q&A app where dentists could get Q&A emails and respond. I've been doing AMA sessions and they've been really successful, and I would like to bring it into our site and make it native. Thanks in advance 🙂

Technical SEO | | Smileworks_Liverpool1 -

If I'm using a compressed sitemap (sitemap.xml.gz) that's the URL that gets submitted to webmaster tools, correct?

I just want to verify that if a compressed sitemap file is being used, then the URL that gets submitted to Google, Bing, etc. and the URL that's used in robots.txt should both indicate that it's a compressed file. For example, "sitemap.xml.gz" -- thanks!
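A short Python sketch of the setup described here, assuming the sitemap is gzip-compressed and served from the site root as `sitemap.xml.gz`. The function names and the `https://www.example.com` host are hypothetical; the `Sitemap:` line follows the robots.txt convention of giving the full URL of the file as it is actually served, `.gz` extension included.

```python
import gzip


def compress_sitemap(xml_text: str) -> bytes:
    """Return the gzip-compressed bytes to be served as sitemap.xml.gz."""
    return gzip.compress(xml_text.encode("utf-8"))


def robots_sitemap_line(site_root: str, filename: str = "sitemap.xml.gz") -> str:
    """Build the robots.txt line that points at the compressed file."""
    return f"Sitemap: {site_root.rstrip('/')}/{filename}"


if __name__ == "__main__":
    sitemap = (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
        "  <url><loc>https://www.example.com/</loc></url>\n"
        "</urlset>\n"
    )
    with open("sitemap.xml.gz", "wb") as handle:
        handle.write(compress_sitemap(sitemap))
    # The same URL would be the one submitted in the search engines' webmaster tools.
    print(robots_sitemap_line("https://www.example.com/"))
```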

Technical SEO | | jgresalfi0 -

Spam URLs in search results

We built a new website for a client. When I do 'site:clientswebsite.com' in Google, it shows some of the real, recently submitted pages. But it also shows many pages of spam URL results, like this 'clientswebsite.com/gockumamaso/22753.htm' - all of which then go to the site's 404 page. They have page titles and meta descriptions in Chinese or Japanese too. Some of the URLs are of real pages, and link to the correct page, despite having the same Chinese page titles and descriptions in the SERPs. When I went to remove all the spammy URLs in Search Console (it only allowed me to temporarily hide them), a whole load of new ones popped up in the SERPs after a day or two. The site files themselves are all fine, with no errors in the server logs. All the usual stuff (robots.txt, sitemap, etc.) seems OK, and the proper pages have all been requested for indexing and are slowly appearing. The spammy ones continue though. What is going on and how can I fix it?

Technical SEO | | Digital-Murph0 -

Does Title Tag location in a page's source code matter?

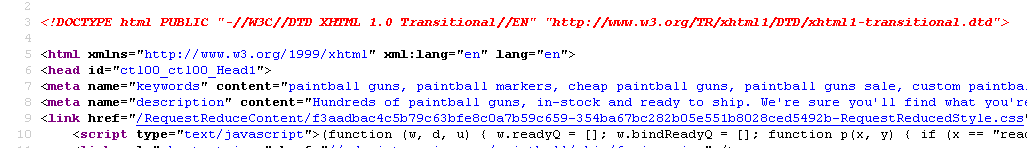

Currently our meta description is on line 8 for our page - http://www.paintball-online.com/Paintball-Guns-And-Markers-0Y.aspx

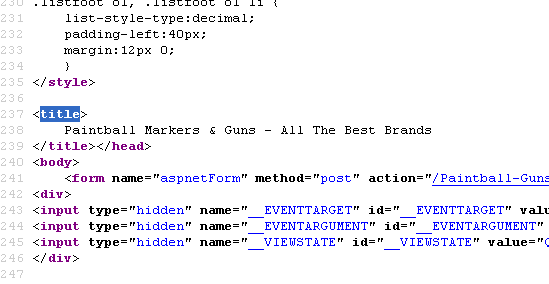

The title tag, however, sits below a bunch of code on line 237.

Does the location of the title tag, meta tags, and any structured data have any influence with respect to SEO and search engines? Put another way, could we benefit from moving the title tag up to the top?

I "surfed 'n surfed" and could not find any articles about this.

I would really appreciate any help on this as our site got decimated organically last May and we are looking for any help with SEO.

Nick

Technical SEO | | Istoresinc0 -
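For the title-tag question above, a small Python sketch of one way to check on which source line the title tag and meta description sit. It uses the standard library's `html.parser`; the class name `HeadTagLocator` and the helper `find_tag_lines` are hypothetical, and the sketch only reports positions. It says nothing about whether the position affects rankings.

```python
from html.parser import HTMLParser


class HeadTagLocator(HTMLParser):
    """Record the 1-based source line of the first <title> and meta description."""

    def __init__(self):
        super().__init__()
        self.lines = {}

    def handle_starttag(self, tag, attrs):
        line, _offset = self.getpos()  # position of the tag currently being parsed
        if tag == "title":
            self.lines.setdefault("title", line)
        elif tag == "meta":
            name = dict(attrs).get("name") or ""
            if name.lower() == "description":
                self.lines.setdefault("meta description", line)


def find_tag_lines(html_source: str) -> dict:
    """Return a mapping such as {'title': 237, 'meta description': 8}."""
    locator = HeadTagLocator()
    locator.feed(html_source)
    locator.close()
    return locator.lines


if __name__ == "__main__":
    page = (
        "<html>\n<head>\n"
        '<meta name="description" content="Example description">\n'
        "<script>\nvar x = 1;\n</script>\n"
        "<title>Example title</title>\n"
        "</head>\n<body></body>\n</html>\n"
    )
    print(find_tag_lines(page))
```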

Structuring URLs for better SEO

Hello, we are rolling out fresh URLs for our new service website. Currently we have our structure as www.practo.com/health/dental/clinic/bangalore. We would like to have it as www.practo.com/health/dental-clinic-bangalore. Can someone advise us which of the above structures would work better, and why? Should this be a focus of attention going forward, since this is like a search engine platform for patients looking for actual doctors? Thanks, Aditya

Technical SEO | | shanky10 -

JavaScript to manipulate Google's bounce rate and time on site?

I was referred to this "awesome" solution to high bounce rates. It is supposed to "fix" bounce rates and lower them through this simple script. When the bounce rate goes way down, rankings dramatically increase (interesting study, but not my question). I don't know JavaScript, but simply adding a script to the footer and watching everything fall into place seems a bit iffy to me. Can someone with experience in JS help me by explaining what this script does? I think it manipulates what is reported to GA, but I'm not sure. It was supposed to be placed in the footer of the page, and then you sit back and watch the dollars fly in. 🙂

Technical SEO | | BenRWoodard1 -

Iframes & SEO

I've got a client that wants a site with all content in iFrames. They saw another site they liked & asked if we could do it. Of course we can technically. How big a negative hit would they take with SEO? Is there anything we can do to mitigate it, such as redirects, etc? Thanks for the help!

Technical SEO | | wcksmith0